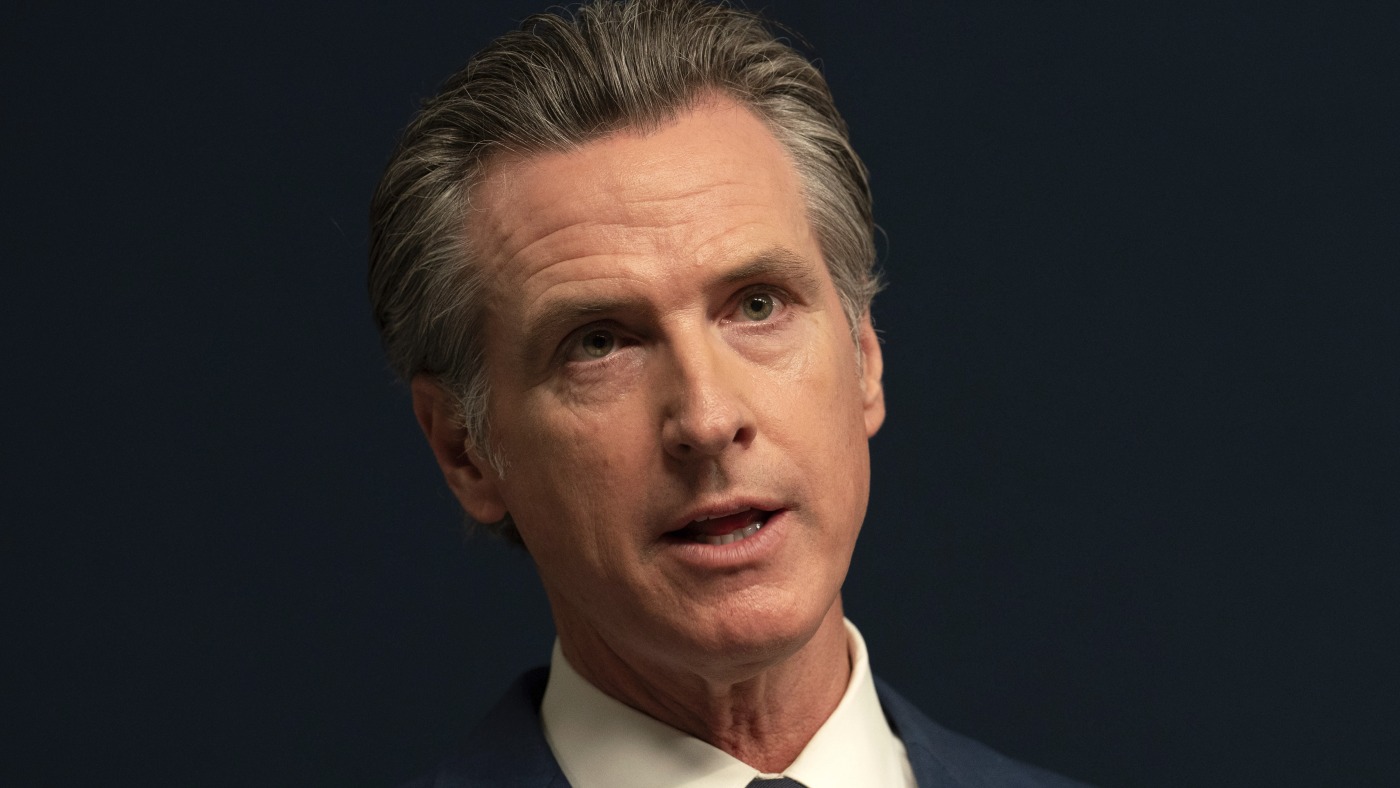

FILE: California Gov. Gavin Newsom vetoed SB 1047, a hotly contested measure that would have been the nation's strictest AI safety law. (AP Photo/Rich Pedroncelli, File)

Rich Pedroncelli/AP

Gov. Gavin Newsom of California on Sunday vetoed a bill that would have enacted the nation’s most far-reaching regulations on the booming artificial intelligence industry.

California legislators had overwhelmingly passed the bill, SB 1047, which was seen as a potential blueprint for national AI legislation.

The measure would have made tech companies legally liable for harms caused by AI models. In addition, the bill would have required tech companies to enable a “kill switch” for AI technology in the event the systems were misused or went rogue.

In a statement, Newsom described the bill as "well-intentioned" but said its "stringent" requirements would have been onerous for the state's leading artificial intelligence companies.

The bill would also have forced the industry to conduct safety tests on "massively powerful AI models," according to California state Sen. Scott Wiener, the bill's co-author. "Each and every one of the large AI labs has promised to perform tests that SB 1047 requires them to do – the same safety tests that some are now claiming would somehow harm innovation."

Indeed, many powerful players in Silicon Valley, including venture capital firm Andreessen Horowitz, OpenAI and trade groups representing Google and Meta, lobbied against the bill, arguing it would slow the development of AI and stifle growth for early-stage companies.

“SB 1047 would threaten that growth, slow the pace of innovation, and lead California’s world-class engineers and entrepreneurs to leave the state in search of greater opportunity elsewhere,” OpenAI’s Chief Strategy Officer Jason Kwon wrote in a letter sent last month to Wiener.

Other prominent figures in tech, however, backed the bill, including Elon Musk and pioneering AI scientists such as Geoffrey Hinton and Yoshua Bengio, who signed a letter urging Newsom to approve it.

“We believe that the most powerful AI models may soon pose severe risks, such as expanded access to biological weapons and cyberattacks on critical infrastructure. It is feasible and appropriate for frontier AI companies to test whether the most powerful AI models can cause severe harms, and for these companies to implement reasonable safeguards against such risks,” wrote Hinton and dozens of former and current employees of leading AI companies.

Other states, like Colorado and Utah, have enacted laws more narrowly tailored to address how AI could perpetuate bias in employment and health-care decisions, as well as other AI-related consumer protection concerns.

Newsom has recently signed other AI bills into law, including one to crack down on the spread of deepfakes during elections. Another protects actors against having their likenesses replicated by AI without their consent.

As billions of dollars pour into the development of AI, and as it permeates more corners of everyday life, lawmakers in Washington still have not passed a single piece of federal legislation to protect people from its potential harms or to provide oversight of its rapid development.